On EN 50716, the latest software standard for safety-critical railway applications

For over 20 years, Systerel has been recognized for its expertise in developing safety-critical software, particularly in the railway sector, where EN 50128 has been the reference standard. EN 50128 will be definitively withdrawn by 2026 in favor of EN 50716, which ensures continuity with its predecessor while bringing its share of improvements and new features.

In this article, we revisit the context, then share some reflections on EN 50716.

Before EN 50716, EN 50128

Standards frameworks don’t change every day. The development cycles of standards, from design to publication, are themselves relatively long.

The arrival of a new version of a standard is therefore an event. It is an opportunity to take the time to review the state of the art in techniques, current practices and recommendations, in order to improve developments and drive ever-increasing product quality.

Software developed under safety constraints is no exception to the rule.

Railway applications, and signaling systems in particular, have historically been, and still are today, governed by the EN 50128 standard. The first version of this standard was published in 2001, its first major revision in 2011, and its last amendment (A2, with quite minor impact) in 2020. That is roughly a decade between successive evolutions.

In parallel, still within railway applications, the EN 50657 standard was published in 2017, this time for rolling stock software. Modeled on EN 50128 (2011), it brings a number of corrections and evolutions. These were, however, not fully carried back into the A2:2020 amendment of EN 50128.[^1]

It was therefore time for a major revision of EN 50128, in light of lessons learned, development practices, and new technologies and tools that have emerged in recent years.

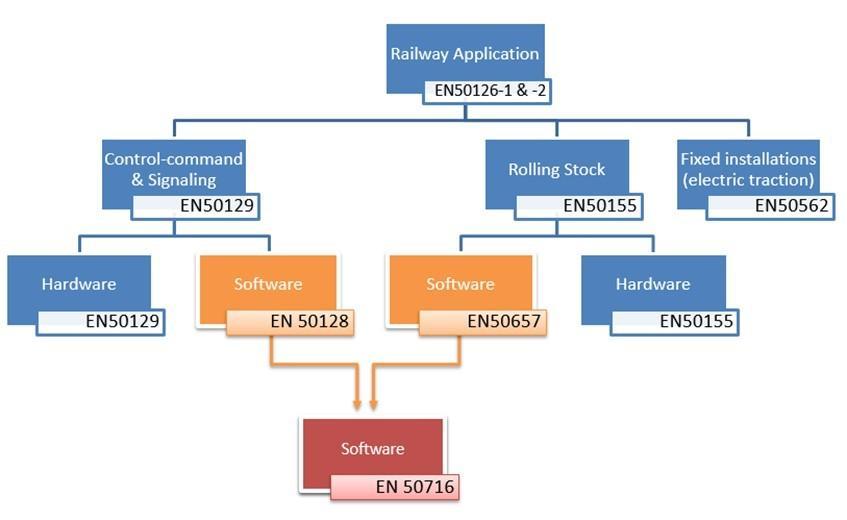

Towards a unified software standards framework for railway applications

EN 50716 thus arrived on our shelves in the last quarter of 2023, three years after the start of its development. It supersedes both EN 50128 and EN 50657, in order to provide a single, harmonized framework for safety-critical software development across all[^2] railway applications.

The three standards will coexist until the end of October 2026. Any development started before then may comply with either the old or the new standard, but past that date, EN 50716 will be the only accepted reference.

Just as EN 50657 had modeled its structure on EN 50128, EN 50716 follows the organization of its predecessors: division into sections, requirement numbering[^3], technique tables with recommendation levels, role and responsibility description sheets. The transition from a previous framework can thus be accomplished smoothly.

Several improvements, and an entirely new annex

Fundamentally, the normative content[^4] has changed little. As an order of magnitude, an estimated 30% to 40% of requirements have been affected compared to EN 50128, but the modifications are largely a matter of rewording or added precision.

Among the significant changes, the following are particularly noteworthy[^5]:

- A simplification pass on Article 5 presenting organizational constraints, which however leaves the constraints largely unchanged,
- A revision of Article 8 dealing with application data, notably introducing new documents, but now excluding “application algorithms”[^6],
- A reorganization pass on Annex A, to distribute methods across recommendation tables in a manner more consistent with the lifecycle, and to revisit techniques related to programming languages and coding standards,
- The removal of the Integration Manager role, whose responsibilities regarding integration testing activities (software/software or software/hardware) are taken over by the Test Manager.

We will not detail these modifications further in this article, and will focus instead on Annex C, whose content is informative.

This annex has been completely transformed in EN 50716: formerly a checklist for documentation control (identifying for each document the Author, 1st Reviewer, 2nd Reviewer), it now offers recommendations for software development on three topics:

- Lifecycle models
- The use of modeling in the lifecycle
- Artificial Intelligence, particularly Machine Learning

Let us go through the three topics addressed by this annex in turn.

Iterative lifecycles, a deliberate rather than reactive approach

The lifecycle model proposed by the standard is linear[^7]: phases follow one another from specification to validation, with the outputs of one phase being the inputs of the next. The standard does not preclude loops: verification and testing activities are notably aimed at detecting errors as early as possible, triggering a step back in the cycle to correct the error. These loops would not occur in a perfect development, and are not planned when setting up the lifecycle, phases and deliverables.

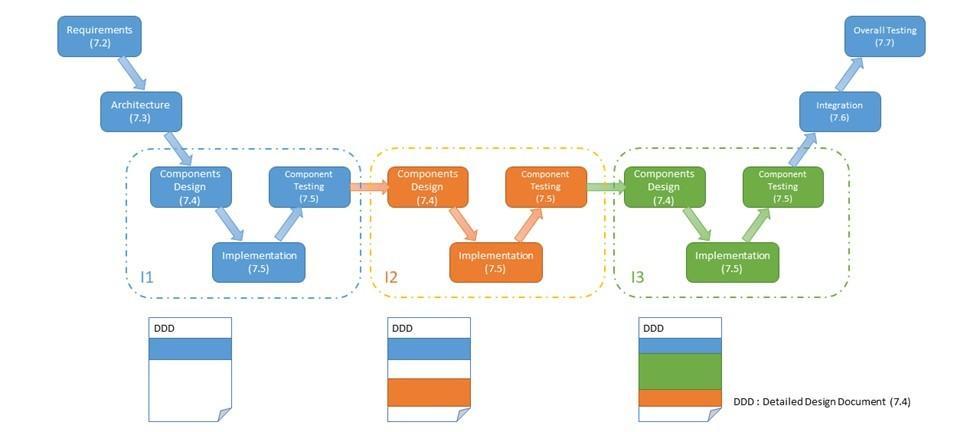

The option opened up by Annex C of EN 50716 is to plan iterations, in order to build the expected products incrementally[^8]. The phases governed by the standard remain unchanged, but one can choose to repeat, or even parallelize, a set of successive phases. The expected output of each iteration must then be defined; verification and validation activities apply normally to each phase of each iteration. This practice is already widespread among industry players.

Operationally speaking, this cycle helps reduce waiting times, enables teams to work in parallel, and provides early feedback on the outputs of the initial phases. However, the incremental treatment of the software scope could introduce major changes late in the project if highly impactful requirements are only addressed during the last iterations. Building an iterative cycle judiciously requires good control over the breakdown of expected outputs and over the risks associated with implementing each sub-part.

Modeling, a practice encouraged and governed by the standard

Models, modeling, formal methods

A model is a logical representation of concepts, which provides additional formalism compared to a natural language description. It comes with a textual or graphical representation, whose semantics are either partially defined (semi-formal modeling, such as UML/SysML) or completely defined (formal modeling, such as temporal logic or the B method).

The more complete the semantics, the more models, through their mathematical foundation, contribute to achieving a high level of rigor, requirement disambiguation, facilitation of verification, and early detection of errors and inconsistencies. Formal methods are the techniques for manipulating, analyzing or verifying these models. As an example, proof techniques can analyze and validate a program or model against safety properties, without executing it.
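To make the idea of checking a model against a safety property more concrete, here is a minimal sketch. It is not taken from the standard or from any particular tool, and it uses exhaustive exploration of a toy state model (explicit-state model checking) rather than deductive proof as practiced with the B method. The two-signal model and the names `successors`, `is_safe` and `find_violation` are hypothetical, chosen only for illustration.

```python
from collections import deque

# Hypothetical toy model: two conflicting signals, each "red" or "green".
# A state is a pair (signal_a, signal_b).
INITIAL_STATE = ("red", "red")


def successors(state, interlocked=True):
    """Return every state reachable in one step from `state`."""
    a, b = state
    result = []
    # A green signal may always return to red.
    if a == "green":
        result.append(("red", b))
    if b == "green":
        result.append((a, "red"))
    # A red signal may clear; with interlocking, only if the other one is red.
    if a == "red" and (b == "red" or not interlocked):
        result.append(("green", b))
    if b == "red" and (a == "red" or not interlocked):
        result.append((a, "green"))
    return result


def is_safe(state):
    """Safety property: the two conflicting signals are never green together."""
    return state != ("green", "green")


def find_violation(interlocked=True):
    """Explore all reachable states breadth-first.

    Returns None if the property holds in every reachable state, otherwise
    a path (list of states) from the initial state to a violating state.
    """
    queue = deque([[INITIAL_STATE]])
    seen = {INITIAL_STATE}
    while queue:
        path = queue.popleft()
        state = path[-1]
        if not is_safe(state):
            return path
        for nxt in successors(state, interlocked):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None
```

With the interlocking rule in place, `find_violation()` returns `None`: the property holds in every reachable state. Calling `find_violation(interlocked=False)` returns a counterexample path ending in `("green", "green")`, which is the kind of early defect discovery that a model with well-defined semantics makes possible.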

Note that implementing modeling may require additional expertise, cost and time compared to non-formal descriptions. But this investment can prove worthwhile given the possibilities offered by the use of models (early defect discovery, automatic code generation, document generation, verification acceleration, reduced testing needs, …).

Towards use throughout the lifecycle

Modeling was already fully covered for the specification phase, as a complement to the natural language description of requirements. Annex C of EN 50716 presents how the use of models can be extended to all activities planned in the lifecycle. Generally speaking, a model is comparable to a document. It can therefore fulfill the expectations placed on a phase output and substitute for it. In particular, a model can be used to automatically generate Source Code (relying on a validated translator). However, the Annex A techniques must then be adapted to apply to the model. Notably:

- Techniques applicable to programming languages must be transposed to the languages of the models used,
- Coding standards and guidelines are replaced by modeling standards and guidelines,
- The desirable properties of Source Code (balance of size/complexity, readability, comprehensibility, testability, …) are transposed to the model,
- Code coverage by tests must be replaced by criteria specific to the chosen modeling approach in order to cover all parts of the model.
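As an illustration of the last point, the sketch below measures transition coverage on a small state machine. This is only one possible model-level criterion, assumed here for the example; the standard leaves the choice to the modeling approach. The level-crossing barrier model, `TRANSITIONS`, `run_scenario` and `transition_coverage` are hypothetical.

```python
# Hypothetical model: a level-crossing barrier as a finite state machine.
# Keys are (state, event) pairs, values are target states.
TRANSITIONS = {
    ("open", "train_approaching"): "closing",
    ("closing", "barrier_down"): "closed",
    ("closed", "train_passed"): "opening",
    ("opening", "barrier_up"): "open",
    ("opening", "train_approaching"): "closing",
}


def run_scenario(events, initial="open"):
    """Replay a test scenario and return the set of transitions it exercised."""
    state = initial
    exercised = set()
    for event in events:
        key = (state, event)
        if key not in TRANSITIONS:
            raise ValueError(f"event {event!r} not allowed in state {state!r}")
        exercised.add(key)
        state = TRANSITIONS[key]
    return exercised


def transition_coverage(scenarios):
    """Return (coverage ratio, sorted list of transitions never exercised)."""
    exercised = set()
    for events in scenarios:
        exercised |= run_scenario(events)
    missing = sorted(set(TRANSITIONS) - exercised)
    return len(exercised) / len(TRANSITIONS), missing
```

A single nominal scenario (train approaches, barrier goes down, train passes, barrier goes up) exercises four of the five transitions; the report then points at the uncovered case, a second train approaching while the barrier is still opening, which is exactly the sort of gap a model-level criterion is meant to reveal.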

To mention other use cases, models can support testing activities (scenario generation, back-to-back testing, simulation, …) or verification activities (property proving). The choice of a modeling approach and the transposition of Annex A techniques must be carefully considered for each activity involving models.
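Back-to-back testing, mentioned above, can be sketched as follows: the same input vectors are fed to the model and to the code derived from it, and any divergence is reported. All names here (`model_brake_demand`, `generated_brake_demand`, `faulty_brake_demand`, `back_to_back`) are hypothetical, and the "generated" functions are hand-written stand-ins rather than the output of a real code generator.

```python
import itertools


def model_brake_demand(speed_kmh, limit_kmh):
    """Reference model: demand braking when speed exceeds the limit."""
    return speed_kmh > limit_kmh


def generated_brake_demand(speed_kmh, limit_kmh):
    """Stand-in for code generated from the model (hypothetical)."""
    return not (speed_kmh <= limit_kmh)


def faulty_brake_demand(speed_kmh, limit_kmh):
    """Stand-in with a deliberate boundary error (>= instead of >)."""
    return speed_kmh >= limit_kmh


def back_to_back(reference, implementation, input_vectors):
    """Run both on the same inputs and return the list of mismatches."""
    mismatches = []
    for args in input_vectors:
        expected = reference(*args)
        actual = implementation(*args)
        if expected != actual:
            mismatches.append((args, expected, actual))
    return mismatches


# Input vectors chosen around the boundaries of the two speed limits.
INPUT_VECTORS = list(itertools.product([0, 79, 80, 81, 160], [80, 160]))
```

Comparing `model_brake_demand` with `generated_brake_demand` on `INPUT_VECTORS` yields no mismatch, whereas the deliberately faulty variant diverges exactly at the boundary inputs `(80, 80)` and `(160, 160)`. The value of the technique depends heavily on how the input vectors are chosen, which is where model-based scenario generation can help.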

In all cases, the models used will be subject to the same requirements as the documentary outputs expected by the standard, namely verification, validation, or independence constraints where relevant. Tools related to models are also subject to the standard’s requirements (notably T3 code generators).

Artificial intelligence, still many challenges ahead

In EN 50128, AI is considered in a use case restricted to defect prediction and correction, with a maintenance orientation. This technique is however classified at a Not Recommended level[^9].

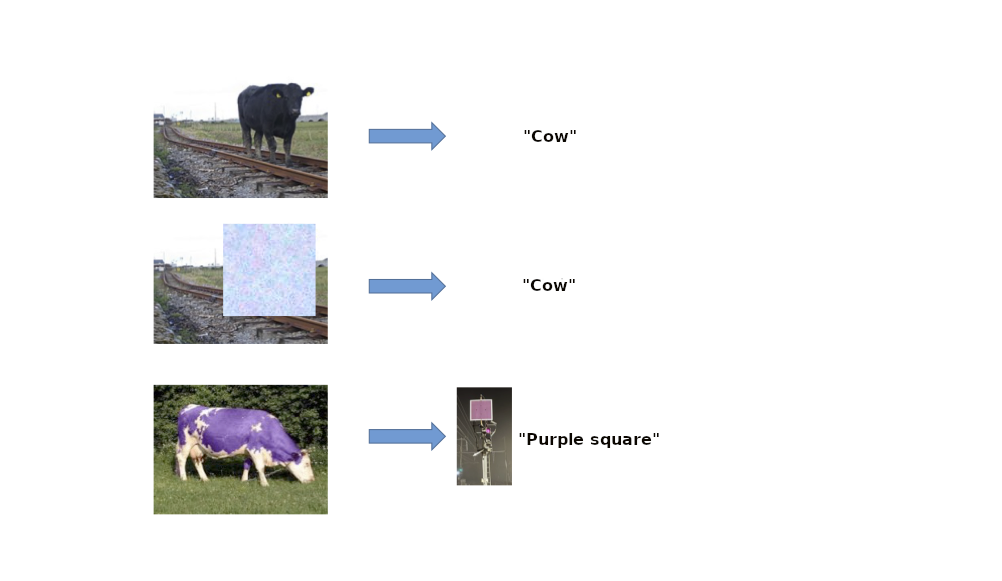

The explosion of AI in recent years brings with it new applications, such as lineside signaling recognition or trackside obstacle detection. These applications rely on software trained on datasets. EN 50716 therefore broadens the scope to software built through Machine Learning. The technique however remains classified at a Not Recommended level!

Indeed, the standard imposes a set of traceability, verification and testing activities to thoroughly control the software code and ensure that its actions meet the expressed need without compromising safety. For trained software, whose internal structure may be unknown or incomprehensible to human reasoning, traceability, static code analysis or test coverage estimation activities may become infeasible.

Four main challenges are currently identified in EN 50716 as limiting factors for fully accepting trained software in highly safety-critical applications. It is not certain that they will ever be resolved.

- How to guarantee that training data is representative of all cases likely to be encountered by the software in operation?
- How to verify/test/analyze the structure of the software?
- How to validate that the software has learned the “right” function[^10]?
- How to reduce sensitivity to “adversarial” attacks? (These consist of causing large variations in the model’s outputs through minor variations in the inputs)
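The last challenge can be illustrated with a deliberately tiny example. The sketch below applies a sign-of-gradient perturbation (in the spirit of the fast gradient sign method) to a linear classifier, for which the gradient of the score with respect to the input is simply the weight vector. The weights, bias, input values and function names are hypothetical and do not come from any trained model.

```python
# Hypothetical linear classifier: score = w . x + b,
# output "permissive" if the score is positive, "restrictive" otherwise.
WEIGHTS = [0.9, -1.1, 0.7, -0.8]
BIAS = 0.05


def score(x):
    """Weighted sum of the inputs plus the bias."""
    return sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS


def classify(x):
    """Map the score to one of the two signal aspects."""
    return "permissive" if score(x) > 0 else "restrictive"


def perturb_towards_permissive(x, epsilon):
    """Shift each input by exactly epsilon in the direction that raises the score.

    For a linear model the gradient of the score with respect to x is
    WEIGHTS, so the sign of each weight gives the direction to push.
    """
    return [xi + epsilon * (1.0 if w > 0 else -1.0) for w, xi in zip(WEIGHTS, x)]
```

With the input `[0.5, 0.5, 0.5, 0.5]`, the classifier answers `"restrictive"`; after shifting every component by only 0.05, it answers `"permissive"`. Real perception models are far more complex, but the mechanism is the same: many small, coordinated input changes add up to a large change in the output.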

Conclusion and outlook

The transition from EN 50128 to EN 50716 should prove smooth given the strong continuity between the two standards. The major changes introduced, whether related to organization and roles, technique tables, or application data development, are aimed at continuous improvement and simplification. The new Annex C encourages the use of iterative cycles and of modeling throughout the lifecycle, and begins to consider (without recommending it for now) the use of software built through machine learning.

The publication of this standard is an opportunity for Systerel to reaffirm its expertise in safety-critical software development, and its ability to support its clients within the EN 50716 standards framework. In particular, Systerel has a well-established culture of modeling techniques and formal methods (B language, proof techniques), and is also embracing techniques brought by AI (and the dependability questions it raises).

Finally, note that the EN 50716 standard will be reviewed five years after its publication, i.e., at the end of 2028. We will follow the evolution of techniques with close attention until then.

[^1]: The underlying motivation for this amendment is the alignment of EN 50128 with the related standards 50126-1 (2017), 50126-2 (2017) and 50129 (2018), in order to obtain a coherent framework. Notably, the concept of SIL0 is replaced by Basic Integrity.

[^2]: With the exception of so-called “Fixed Installations” applications, which primarily concern traction power supply. See EN 50562.

[^3]: Notably leaving some requirements intentionally blank!

[^4]: As opposed to informative content.

[^5]: Non-exhaustive list: the modifications presented in this list were selected according to the author’s judgment.

[^6]: These algorithms are now considered as full-fledged software, and are subject to the complete Article 7 lifecycle, rather than the lightweight process specific to configuration, covered by Article 8.

[^7]: The so-called “V” cycle is a linear cycle! The V layout is simply a visual aid to align a design phase with a test phase at the same level.

[^8]: The resemblance to “agile” development is striking, although not explicit in the standard.

[^9]: In other words, a solid argument will be expected to justify the use of this technique.

[^10]: The model may have been pre-trained on non-specific datasets (e.g., all sorts of traffic lights), before moving on to specific datasets (French railway network signals). How to be convinced that the software is suited to the specific application? (And that it does not recognize a permissive signal in the green light of the neighboring national road)